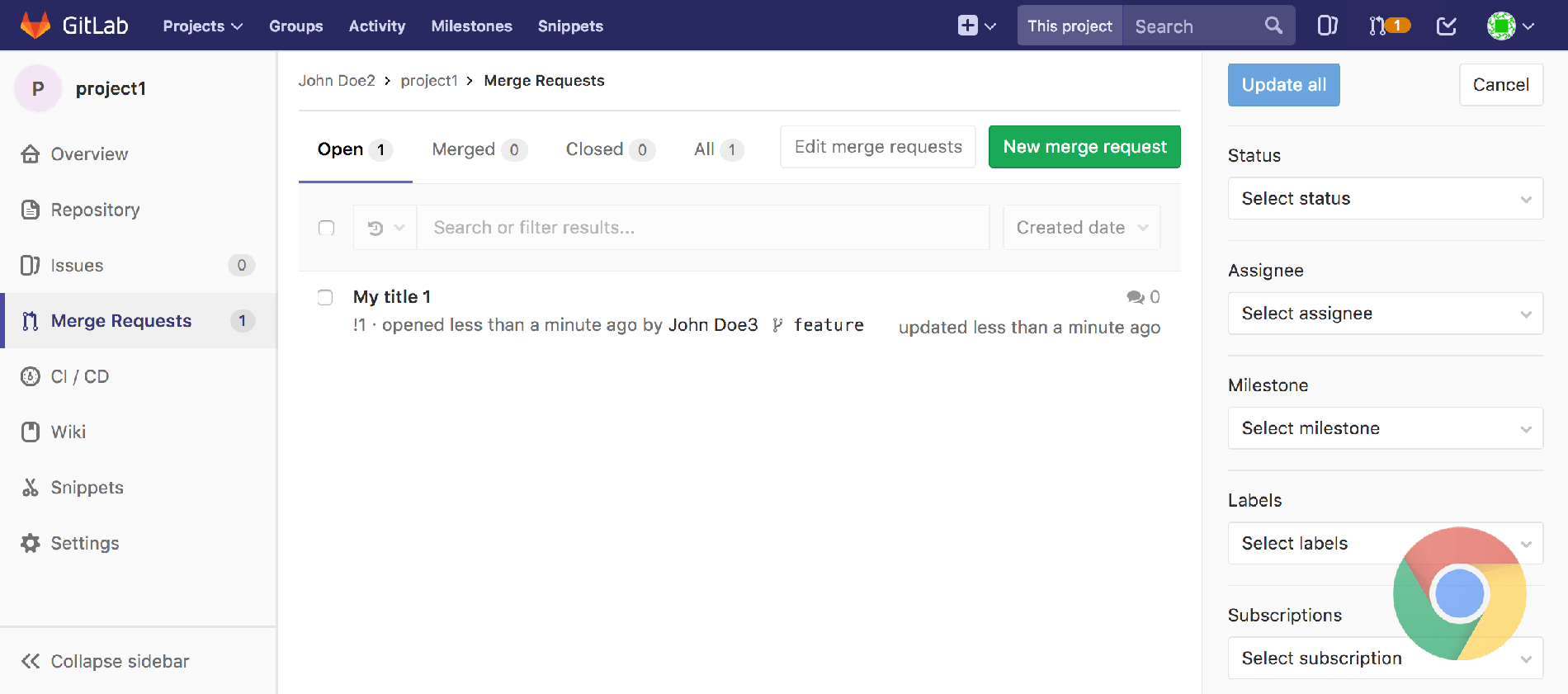

A short article titled Detecting Chrome Headless popped up on Hacker News over the weekend and it has since been making the rounds. Most of the discussion on Hacker News was focused on the author’s somewhat dubious assertion that web scraping is a “malicious task” that belongs in the same category as advertising fraud and hacking websites. That’s always a fun debate to get into, but the thing that I really took issue with about the article was that it implicitly promoted the idea of blocking users based on browser fingerprinting. As far as I’m concerned, this is generally a terrible idea and you’re far more likely to block and frustrate your users than to provide any sort of meaningful deterrent to those who you are trying to block.

To illustrate this point, I implemented all of the tests proposed in Detecting Chrome Headless and, unsurprisingly, my standard everyday browser failed some of the tests. I sent the same test to a handful of friends on different platforms and every single one failed at least one of these. Check for yourself and see if you would be blocked as well.

None of us would be able to visit a website that naïvely implemented the proposed set of tests and blocked content based on them. As an example, one of the proposed tests, the one that the author called his “most robust”, was that the dimensions of a broken image not be equal to 0x0. This test might have worked in Chrome 59, but it no longer does in Chrome 60. If you just check a couple of browser configurations and then block content based on that, then there’s no question that you are going to be blocking people unintentionally.

To implement filtering like this in a remotely responsible way would require really knowing what you’re doing and it would involve an immense amount of effort. To make something that is legitimately robust, you would realistically need to support a different set of tests for Chrome, Safari, Firefox, Chromium, Opera, Brave, etc., and you would also need the tests to be version-specific and constantly updated. It’s not like any of these tests aren’t trivial to get around anyway. You might successfully block Joe Schmoe who disabled the Chromium PDF Plugin due to security concerns, but anybody seriously interested in advertising fraud, blackhat hacking, or, god forbid, web scraping isn’t going to have any difficulty getting around any test that you can come up with. It’s like DRM: the best you can hope for is to universally give your users a worse experience so that you can mildly inconvenience the people who will get around any deterrents anyway.

To help emphasize that these sorts of tests aren’t actually effective in practice, I decided to go through and show how each one of them can be bypassed. It only took a few hundred lines of code to make Chrome Headless do better on the tests than standard Chrome! These won’t, of course, make Chrome Headless universally undetectable, but any more sophisticated tests can easily be bypassed in similar ways. Hopefully, you’ll find the techniques proposed here interesting whether you’re doing some web scraping of your own or just curious to see how it’s done.

The user agent check is pretty much the only one of the proposed tests that can legitimately identify Chrome Headless, but it’s also the most trivial to bypass. The default user agent when running Chrome in headless mode will be something like Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) HeadlessChrome/.50 Safari/537.36, where the key part that gives it away is HeadlessChrome.
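To make the user agent point concrete, here is a minimal Python sketch of what such a check boils down to and why it is so easy to sidestep. The function names and the sample version number are my own assumptions for illustration; they don't come from the article being discussed, and a real detection script would run in the browser rather than in Python.

```python
# Illustrative sketch only: the function names and the sample version number
# below are assumptions made for this example.

HEADLESS_MARKER = "HeadlessChrome"


def looks_headless(user_agent: str) -> bool:
    """Return True if the user agent string contains the headless marker."""
    return HEADLESS_MARKER in user_agent


def spoof_user_agent(user_agent: str) -> str:
    """Rewrite the headless marker so the string resembles regular Chrome."""
    return user_agent.replace(HEADLESS_MARKER, "Chrome")


if __name__ == "__main__":
    # Hypothetical version number (60.0.0.0), used purely for illustration.
    headless_ua = (
        "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) HeadlessChrome/60.0.0.0 Safari/537.36"
    )
    print(looks_headless(headless_ua))
    print(looks_headless(spoof_user_agent(headless_ua)))
```

The whole test hinges on a single substring, so one string replacement is enough to defeat it. In practice the rewritten string would have to be handed to the browser at launch, for example through Chrome's `--user-agent` command-line switch.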
